Feed [1, 2, 3] into a standard neural network, then feed [3, 2, 1]. You get different outputs. But for sorting or finding a maximum, these inputs represent the same set. The network shouldn't care about order.

Why Sutskever Included This

Sequence-to-sequence models assume input order carries meaning. For graph processing, point clouds, and molecular structures, that assumption fails. This paper extends neural sequence models to handle unordered data.

The Permutation Problem

Consider sorting. Given input {5, 2, 8}, the correct output is [2, 5, 8] regardless of how you present the input. Train a standard LSTM only on the presentation [5, 2, 8], and it learns to map that particular arrangement to the answer. Provide [8, 5, 2] at test time and performance can degrade sharply, because the model has learned spurious correlations between position and value.

Mathematically, a function f is permutation invariant if f(x₁, x₂, ..., xₙ) = f(x_π(1), x_π(2), ..., x_π(n)) for every permutation π of the indices 1, ..., n. Standard RNNs do not guarantee this property: in general, the hidden state after processing [A, B] differs from the state after [B, A].
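To make the definition concrete, here is a minimal sketch of a one-unit tanh RNN next to a pool-then-squash function. The weights `w_h` and `w_x` and the inputs 0.2 and 0.9 are arbitrary values chosen for illustration, not taken from the paper.

```python
import math

def rnn_final_state(xs, w_h=0.5, w_x=1.0):
    """Run a one-unit tanh RNN over xs and return the final hidden state."""
    h = 0.0
    for x in xs:
        h = math.tanh(w_h * h + w_x * x)
    return h

def sum_then_squash(xs):
    """Permutation-invariant alternative: pool first, then apply the nonlinearity."""
    return math.tanh(sum(xs))

a, b = 0.2, 0.9  # arbitrary illustrative inputs

rnn_ab = rnn_final_state([a, b])
rnn_ba = rnn_final_state([b, a])
pooled_ab = sum_then_squash([a, b])
pooled_ba = sum_then_squash([b, a])

print(rnn_ab, rnn_ba)          # two different hidden states
print(pooled_ab == pooled_ba)  # True
```

The recurrent update applies the nonlinearity between elements, so the element seen last has a different influence than the one seen first. Pooling before the nonlinearity removes that asymmetry.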

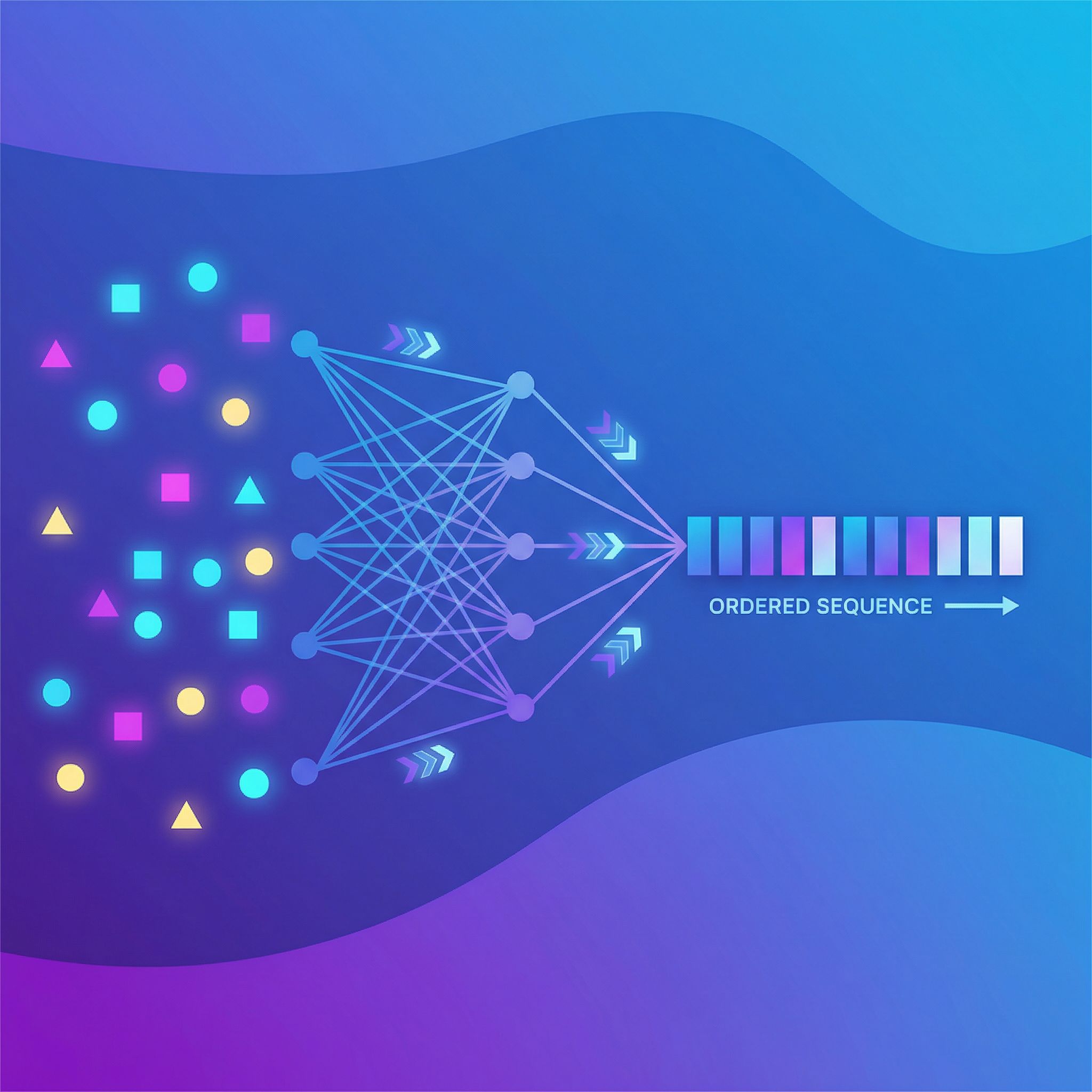

Read-Process-Write

The solution has three stages:

Read: Encode each set element independently. No information flows between elements yet, so no ordering bias creeps in.

Process: Combine encodings using a permutation-invariant operation. Sum, mean, and max pooling all work because the underlying operations are commutative and associative: a + b = b + a, and max(a, b) = max(b, a). The result is identical regardless of input order.

Write: Decode from the pooled representation to produce an ordered output sequence. Attention lets the decoder focus on relevant elements when generating each output token.

Input: {5, 2, 8} (any order)

Read: encode(5), encode(2), encode(8)

Process: pool([e₅, e₂, e₈]) → single vector

Write: decode → [2, 5, 8]
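The read and process stages can be sketched in a few lines of plain Python. This is an illustration under assumptions, not the paper's model: the feature map `(1, x, x*x)` in `read` is a hypothetical stand-in for a learned encoder, and the write stage is left out because it needs a trained decoder.

```python
from itertools import permutations

def read(x):
    """Encode one element on its own; the features (1, x, x^2) are a hypothetical choice."""
    return (1, x, x * x)

def process(encodings):
    """Sum-pool the encodings coordinate-wise into a single vector."""
    return tuple(sum(coord) for coord in zip(*encodings))

def summarize(elements):
    """Read every element independently, then pool."""
    return process([read(x) for x in elements])

# Collect the pooled vector for all six orderings of the same set.
pooled = {summarize(order) for order in permutations([5, 2, 8])}
print(pooled)  # {(3, 15, 93)}
```

All six orderings collapse to one pooled vector, which is what lets the later stages ignore how the input was presented.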

Pooling Strategies

There are several ways to combine element encodings:

Sum/Mean pooling: Add encodings together or average them. Works when all elements contribute equally.

Max pooling: Take element-wise maximum across encodings. Useful when extreme values matter.

Attention pooling: Learn weights for each element based on a query vector. More expressive, heavier to compute.

Each operation is invariant to permutations of its inputs: shuffle the inputs and the pooled output stays identical.
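The pooling operations listed above can be checked directly. The sketch below uses made-up two-dimensional encodings and a hypothetical fixed `query` vector; in a trained model both would be learned. Comparisons use a small tolerance because floating-point sums can differ in the last digit when the order of addition changes.

```python
import math
from itertools import permutations

def sum_pool(vectors):
    return [sum(col) for col in zip(*vectors)]

def mean_pool(vectors):
    return [s / len(vectors) for s in sum_pool(vectors)]

def max_pool(vectors):
    return [max(col) for col in zip(*vectors)]

def attention_pool(vectors, query):
    """Softmax-weighted average; weights come from dot products with the query."""
    scores = [sum(q * v for q, v in zip(query, vec)) for vec in vectors]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [sum(e / total * vec[i] for e, vec in zip(exps, vectors))
            for i in range(len(query))]

def close(u, v):
    return all(math.isclose(a, b, rel_tol=1e-9, abs_tol=1e-12) for a, b in zip(u, v))

encodings = [[0.5, -1.0], [2.0, 0.3], [-0.7, 1.5]]  # made-up element encodings
query = [1.0, 0.5]  # hypothetical; would be learned in practice

poolers = {
    "sum": sum_pool,
    "mean": mean_pool,
    "max": max_pool,
    "attention": lambda vs: attention_pool(vs, query),
}

# For each pooler, compare every ordering against the original ordering.
invariant = {
    name: all(close(pool(list(p)), pool(encodings)) for p in permutations(encodings))
    for name, pool in poolers.items()
}
print(invariant)  # every entry is True
```

Attention pooling stays invariant because each weight depends only on the element itself and the query, never on the element's position in the list.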

Why Attention Still Works

The decoder generates ordered output from unordered input. When generating output token t, it attends to set elements, focusing on whichever is relevant for position t.

For sorting, when generating the first output token, the decoder attends most to the minimum element. For the second token, the second-smallest. Attention weights encode the ordering logic.
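The sorting behaviour described above can be imitated with a hand-written scoring rule. This is a toy sketch and not the paper's trained decoder: the score `-sharpness * x` and the masking of already emitted elements are assumptions standing in for what a trained model would have to learn.

```python
import math

def softmax(scores):
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def decode_sorted(elements, sharpness=5.0):
    """Emit elements smallest-first by repeatedly attending over the set."""
    used = [False] * len(elements)
    output, attention_trace = [], []
    for _ in elements:
        # Hand-written score: smaller values score higher; emitted elements are masked.
        scores = [-math.inf if used[i] else -sharpness * x
                  for i, x in enumerate(elements)]
        weights = softmax(scores)
        pick = max(range(len(elements)), key=lambda i: weights[i])
        used[pick] = True
        output.append(elements[pick])
        attention_trace.append(weights)
    return output, attention_trace

print(decode_sorted([5, 2, 8])[0])  # [2, 5, 8]
print(decode_sorted([8, 5, 2])[0])  # [2, 5, 8]
```

The returned `attention_trace` holds one weight vector per output step, so you can inspect which element received the most attention at each position.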

Experiments

The paper tests on sorting sequences of numbers. Standard seq2seq, trained on inputs in one order, fails when test inputs arrive in different orders. Accuracy drops from near-perfect to random.

The set-aware model maintains accuracy regardless of input permutation. The architecture encodes the correct inductive bias: sets are unordered.

Beyond Sorting

The architecture applies wherever input order is arbitrary.

Point clouds: 3D points have no natural ordering. Set-based processing handles any arrangement.

Graph nodes: Graphs can be represented with nodes in any order. Set processing ignores the arbitrary choice.

Multi-object scenes: The order in which you list detected objects shouldn't affect scene understanding.

Connection to Transformers

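Self-attention computes weighted sums over elements. Without positional encodings the operation is permutation-equivariant: reorder the inputs and the outputs are reordered the same way, with no other change. Pooling over those outputs then yields a permutation-invariant result. Positional encodings add order when needed, but attention itself treats positions symmetrically.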
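A small check of how self-attention behaves when its inputs are reordered. The sketch uses single-head dot-product attention with identity projections and no positional encodings, which is a simplification of a real transformer layer; the input matrix `X` and the permutation are arbitrary.

```python
import math

def self_attention(X):
    """Single-head dot-product self-attention with identity projections (a simplification)."""
    d = len(X[0])
    out = []
    for q in X:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in X]
        top = max(scores)  # subtract the max for numerical stability
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        out.append([sum(e / total * v[j] for e, v in zip(exps, X)) for j in range(d)])
    return out

def mean_pool(rows):
    return [sum(col) / len(rows) for col in zip(*rows)]

def close(u, v):
    return all(math.isclose(a, b, rel_tol=1e-9, abs_tol=1e-12) for a, b in zip(u, v))

X = [[1.0, 0.0], [0.0, 2.0], [-1.0, 1.0]]  # arbitrary inputs
perm = [2, 0, 1]                           # arbitrary reordering
X_perm = [X[i] for i in perm]

Y = self_attention(X)
Y_perm = self_attention(X_perm)

# Output rows follow the input reordering (equivariance).
equivariant = all(close(Y_perm[r], Y[perm[r]]) for r in range(len(perm)))
# Pooling over the rows removes the ordering (invariance).
invariant_after_pooling = close(mean_pool(Y_perm), mean_pool(Y))
# Row by row, the outputs are not the same as before.
rows_unchanged = all(close(Y_perm[r], Y[r]) for r in range(len(perm)))

print(equivariant, invariant_after_pooling, rows_unchanged)  # True True False
```

Each output row moves with its input row, so the layer on its own preserves the ordering it was given rather than erasing it. A symmetric pooling step on top is what produces an order-independent summary.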

Paper #13 (Attention Is All You Need) builds the transformer architecture on related principles.

Why This Matters

Neural networks are function approximators. When the target function has structure like permutation invariance, encoding that structure directly works better than hoping the network discovers it from data. The payoff is typically less data, faster training, and better generalization.

The same logic applies broadly: identify your problem's symmetries and build them into the architecture.

More in This Series

Part of a series on Ilya Sutskever's recommended 30 papers, connecting each to practical AI development.