Sequence-to-sequence models generate outputs from a fixed vocabulary. But what if the output needs to reference positions in the input? Vinyals, Fortunato, and Jaitly's 2015 paper repurposed the attention mechanism to solve this: instead of blending encoder states, use attention weights as a probability distribution over input positions.

Why Sutskever Included This

Pointer Networks opened a new class of problems to neural network solutions. Before this paper, combinatorial optimization problems with variable-length outputs couldn't be addressed by seq2seq models. The paper's acknowledgments thank Sutskever for useful discussions, and co-author Oriol Vinyals worked closely with Sutskever at Google Brain.

The Variable Dictionary Problem

Consider sorting a sequence of numbers. The input might be [3, 1, 4, 1, 5]. The output should be the indices that sort it: [1, 3, 0, 2, 4] (pointing to positions in the original sequence).

A standard seq2seq model can't do this. The output vocabulary would need to include all possible indices, but the number of indices depends on input length. A model trained on sequences of length 5 couldn't handle sequences of length 10.

The same problem appears in convex hull computation and the Travelling Salesman Problem. The output is a sequence of pointers to input points, and the number of points varies.

Standard Attention vs Pointer Attention

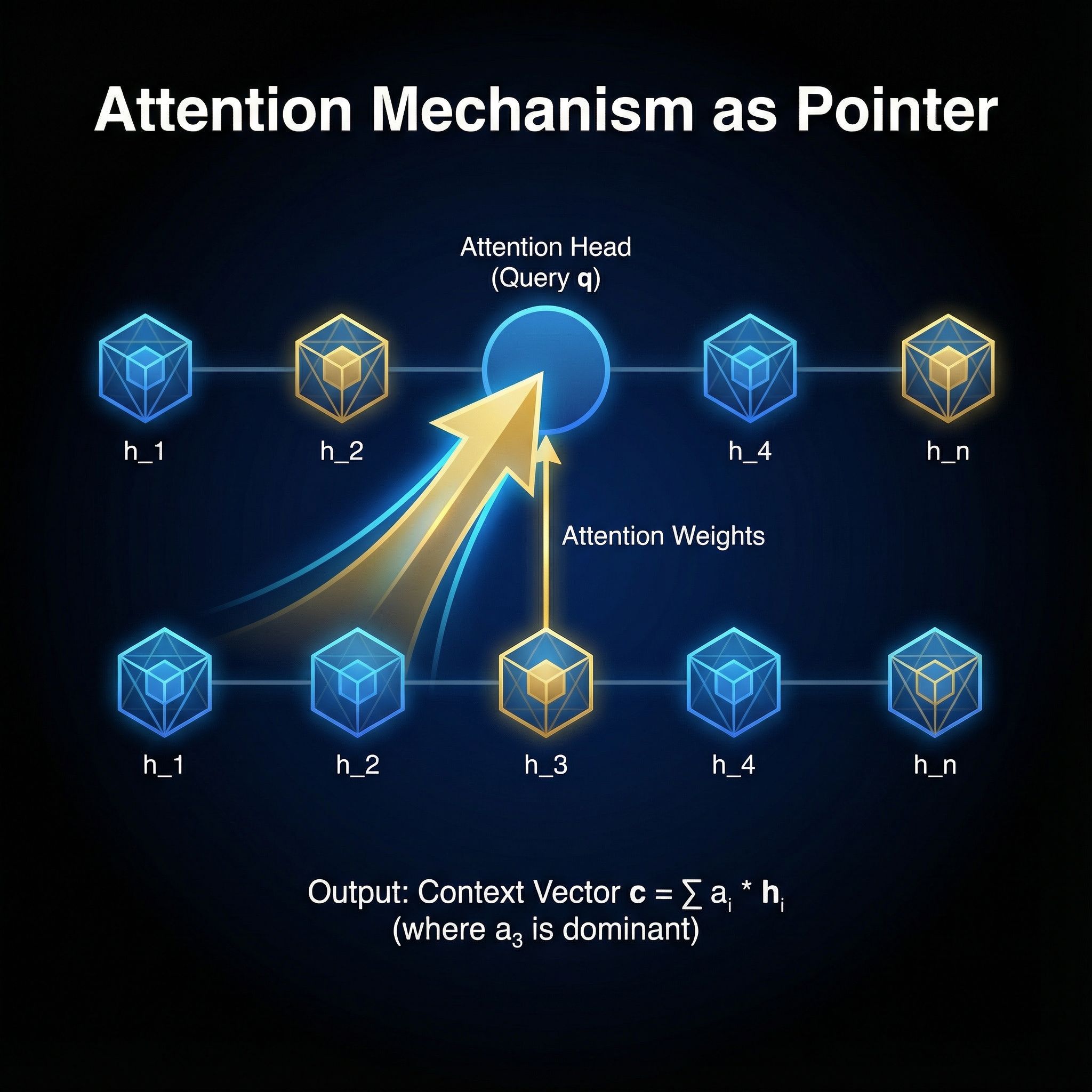

In standard attention, the decoder queries the encoder at each step. Attention weights determine how much each encoder state contributes to a context vector. This context vector then helps predict the next output token from a fixed vocabulary.

# Standard attention

weights = softmax(score(decoder_state, encoder_states))

context = sum(weights * encoder_states)

output = predict_from_vocabulary(context)

# Pointer attention

weights = softmax(score(decoder_state, encoder_states))

output = argmax(weights) # directly select input position

Pointer Networks skip the context vector and vocabulary prediction. The attention weights themselves become the output distribution. The position with highest weight is the selected input element.

The Architecture

The encoder is a standard LSTM that processes the input sequence, producing hidden states for each position. The decoder is also an LSTM, but instead of predicting tokens, it produces query vectors.

At each decoder step, the query vector is compared against all encoder hidden states using a learned scoring function. The softmax of these scores gives a probability distribution over input positions. Training maximizes the probability of the correct position sequence.

The scoring function is simple: a feedforward layer that takes the concatenated decoder and encoder states, applies tanh, then projects to a scalar. This matches the Bahdanau attention mechanism but interprets the output differently.

Solving Combinatorial Problems

The paper demonstrates Pointer Networks on geometric problems. For convex hulls, given a set of 2D points, the network outputs the subset that forms the hull boundary by pointing to vertices in sequence. For the Travelling Salesman Problem, given cities, it outputs an ordering that minimizes total distance. The network doesn't find optimal TSP solutions but produces reasonable approximations, trained on examples solved by conventional algorithms.

Generalization Beyond Training Length

Networks trained on sequences of length n generalize to longer sequences. A model trained on 50-city TSP instances produces valid (though suboptimal) tours for 100-city instances. The attention mechanism scales naturally because it compares the decoder state against however many encoder states exist.

This contrasts with fixed-vocabulary models, which can't even attempt inputs longer than their vocabulary size.

Why This Matters for Modern AI

Pointer Networks established that attention can serve as a selection mechanism, not just a weighting scheme. This idea appears throughout modern architectures.

Copy mechanisms in language models let models copy words directly from input rather than regenerating from vocabulary, improving accuracy on rare words and proper nouns. Extractive summarization works similarly: instead of generating summaries word by word, models point to sentences in the source document. Program synthesis uses pointer mechanisms to select variable names from context rather than hallucinating identifiers.

Connection to Transformers

Transformer attention computes pairwise interactions between all positions. In self-attention, each position attends to all others. This can be viewed as every position having "pointers" to every other position, with learned weights determining which pointers matter.

Cross-attention in encoder-decoder transformers closely resembles Pointer Networks. The decoder queries the encoder, and the attention weights indicate which encoder positions are relevant. The difference is that transformers typically use these weights to blend representations rather than select positions directly.

Some transformer variants, particularly for structured prediction tasks, use pointer-style attention as an output mechanism, combining the representational power of transformers with the selection capability of Pointer Networks.

The Broader Lesson

Attention was designed for one purpose (improving seq2seq with long sequences) and repurposed for another (selection from variable-length inputs). This kind of mechanism reuse appears repeatedly in deep learning. Dropout was regularization; it became approximate Bayesian inference. Batch normalization was training stabilization; it became a form of regularization. Architectural components often have uses beyond their original design.

Further Reading

More in This Series

Part of a series on Ilya Sutskever's recommended 30 papers, connecting each to practical AI development.