Molecules, social networks, knowledge graphs, 3D meshes. Many datasets have inherent graph structure: nodes connected by edges. Standard neural networks expect fixed-size grids or sequences. Graph neural networks operate directly on graphs of any shape.

Why Sutskever Included This

Graph-structured data is everywhere. Drug discovery, recommendation systems, chip design, physics simulation. The message passing framework unifies how neural networks process these diverse problems. GNNs extend deep learning beyond images, text, and audio to arbitrary relational structures.

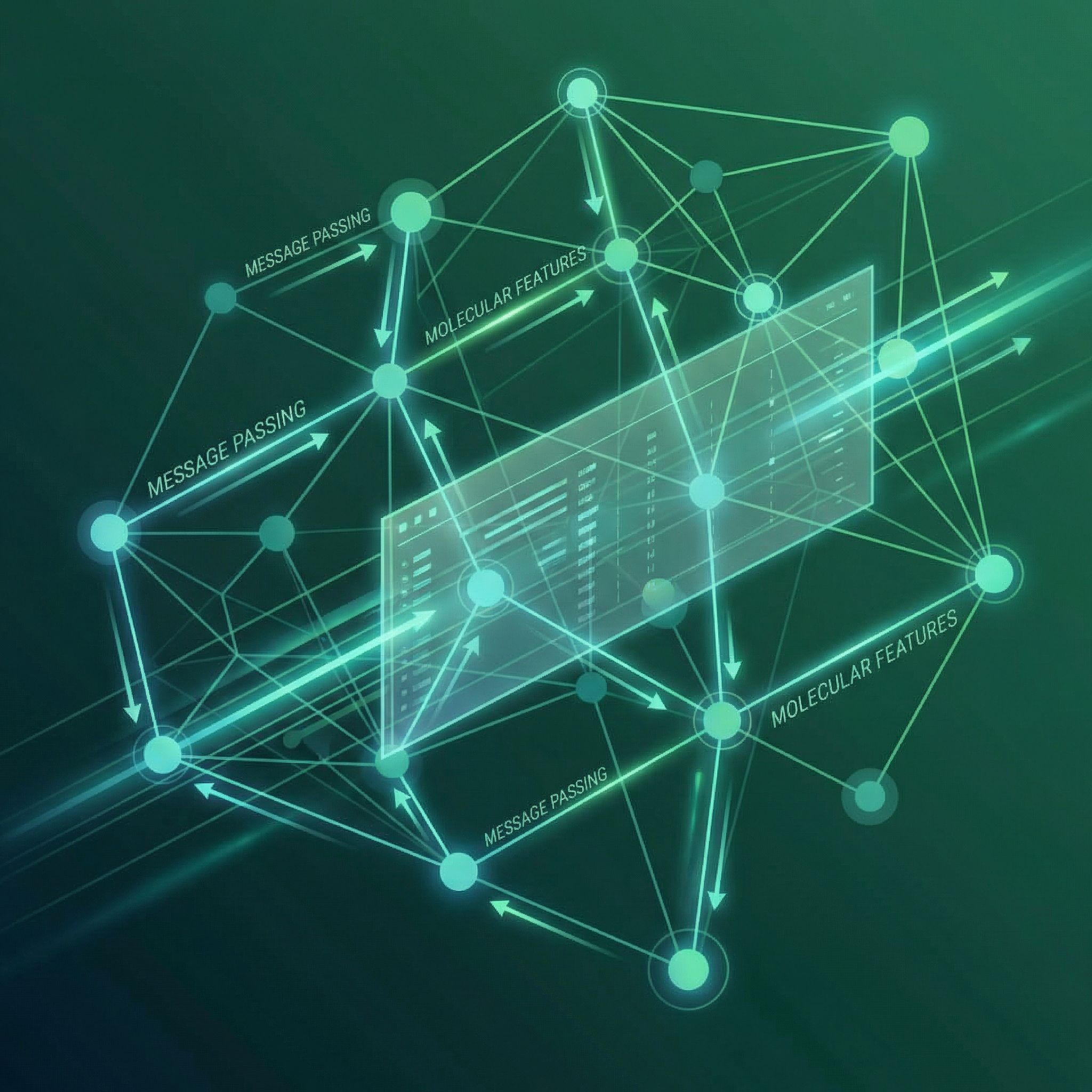

The Message Passing Framework

Each node has a feature vector. Processing happens in rounds:

Message: For each edge, compute a message based on the connected nodes and edge features.

Aggregate: Each node collects messages from all neighbors. Use a permutation-invariant function: sum, mean, or max.

Update: Combine the aggregated message with the node's current state to produce a new representation.

m_v = Aggregate({Message(h_u, h_v, e_uv) : u ∈ Neighbors(v)})

h_v' = Update(h_v, m_v)

Repeat for T rounds. Each round expands the receptive field by one hop. After T rounds, each node's representation incorporates information from nodes T edges away.

Graph-Level Predictions

Sometimes you need one output for the whole graph (is this molecule toxic?). After message passing, pool all node representations into a single vector. Sum pooling, mean pooling, or attention-based pooling all work.

This readout function must be permutation invariant. The graph has no canonical node ordering; the output shouldn't depend on how you list the nodes.

Molecular Property Prediction

Atoms are nodes. Bonds are edges. Atom type and charge become node features. Bond type (single, double, aromatic) becomes edge features. Message passing lets atoms influence each other through the molecular structure.

After several rounds, each atom's representation encodes its chemical environment. Pool these into a molecule-level prediction: solubility, toxicity, binding affinity.

Common Architectures

GCN (Graph Convolutional Network): Simplest variant. Aggregates neighbor features with degree normalization. Fast but limited expressiveness.

GraphSAGE: Samples fixed-size neighborhoods for scalability. Works on large graphs where full neighborhood aggregation is expensive.

GAT (Graph Attention Network): Learns attention weights over neighbors. Different neighbors contribute differently to the update.

GIN (Graph Isomorphism Network): Maximally expressive for distinguishing graph structures. Theoretically grounded in the Weisfeiler-Leman graph isomorphism test.

Limitations

Over-smoothing: With many layers, all node representations converge to similar values. Information diffuses too broadly. Most GNNs use 2-4 layers.

Expressiveness: Standard message passing can't distinguish certain non-isomorphic graphs. Higher-order GNNs exist but are more expensive.

Scalability: Neighborhood aggregation on large graphs is memory-intensive. Mini-batching requires careful subgraph sampling.

Beyond Molecules

Social networks: Users are nodes, friendships are edges. Predict user interests from network structure.

Knowledge graphs: Entities are nodes, relations are typed edges. Learn embeddings for link prediction.

3D point clouds: Build graphs from spatial proximity. Process LiDAR data for autonomous driving.

Chip design: Circuit components are nodes, wires are edges. Optimize placement and routing.

Connection to Paper #8

The aggregation step in GNNs connects to paper #8 (Seq2Seq for Sets). Both use permutation-invariant pooling to handle unordered inputs. A node's neighbors form a set; the aggregation function treats them symmetrically.

Further Reading

More in This Series

Part of a series on Ilya Sutskever's recommended 30 papers.