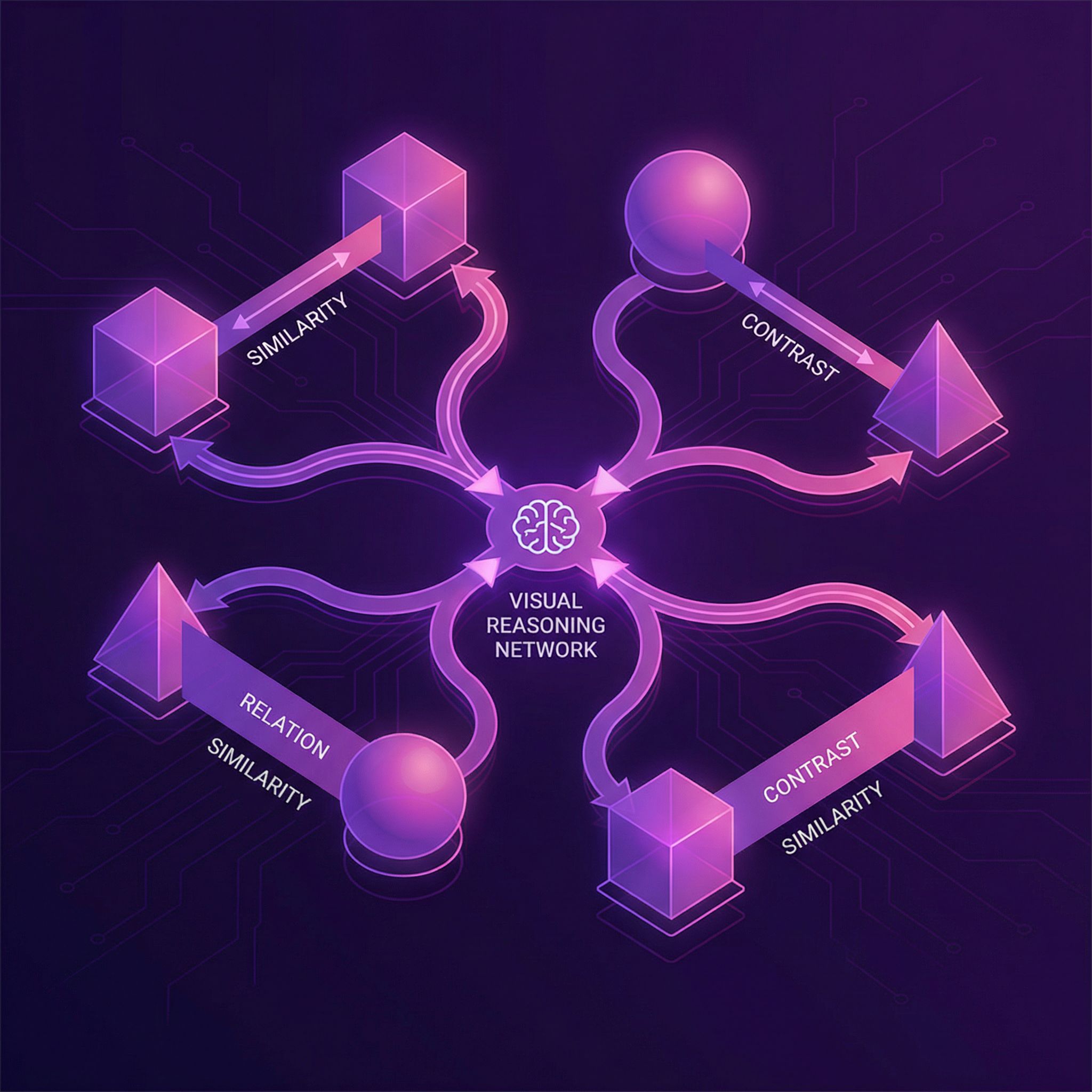

"Is the blue sphere larger than the red cube?" Answering requires comparing two objects. Standard CNNs process images holistically, missing explicit object comparisons. Relation Networks compute features for every pair of objects, enabling direct relational reasoning.

Why Sutskever Included This

Reasoning about relationships is central to intelligence. "A is left of B" and "B is larger than C" require comparing entities, not just recognizing them individually. Relation Networks provide a simple, effective module for this capability.

The Relational Bottleneck

CNNs excel at recognizing objects: "there's a red cube." But relational questions require comparing objects: "is the red cube left of the blue sphere?" Standard architectures must learn such comparisons implicitly through many layers.

On Sort-of-CLEVR, a simplified diagnostic dataset introduced in the Relation Networks paper, a CNN followed by an MLP reached only about 63% accuracy on relational questions. Non-relational questions (such as identifying an object's color or shape) were much easier, at 94%+.

Explicit Pairwise Computation

Relation Networks make comparison explicit. Given objects o₁, o₂, ..., oₙ:

RN(O) = f( Σᵢⱼ g(oᵢ, oⱼ, q) )

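g computes relational features for each pair of objects, conditioned on the question q. The sum pools the features of all pairs into a single vector, and f maps that vector to the final answer. In the paper, both g and f are small MLPs trained end to end.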

Every pair gets compared. The network can learn that "left of" depends on x-coordinates, "larger than" depends on size. Explicit comparison makes relational learning easier.
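The formula above can be sketched in a few lines of NumPy. This is a minimal illustration with random, untrained weights, not the paper's implementation; the helper names (`mlp`, `init_mlp`, `relation_network`) and all layer sizes are assumptions chosen for the example.

```python
import numpy as np

def mlp(x, weights):
    """Apply a ReLU MLP; the last layer is linear."""
    for k, (W, b) in enumerate(weights):
        x = x @ W + b
        if k < len(weights) - 1:
            x = np.maximum(x, 0.0)
    return x

def init_mlp(rng, sizes):
    """Random (untrained) weights for layers of the given sizes."""
    return [(rng.normal(0.0, 0.1, (m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def relation_network(objects, question, g_weights, f_weights):
    """RN(O) = f( sum_{i,j} g(o_i, o_j, q) ) for objects of shape (n, d)."""
    n = objects.shape[0]
    left = np.repeat(objects, n, axis=0)   # o_i for every ordered pair (i, j)
    right = np.tile(objects, (n, 1))       # o_j for every ordered pair (i, j)
    q = np.tile(question, (n * n, 1))      # question appended to every pair
    pairs = np.concatenate([left, right, q], axis=1)  # (n*n, 2d + dq)
    relations = mlp(pairs, g_weights)      # g applied to each pair
    pooled = relations.sum(axis=0)         # sum over all pairs
    return mlp(pooled, f_weights)          # f maps the sum to answer logits

rng = np.random.default_rng(0)
n, d, dq, hidden, n_answers = 5, 8, 4, 16, 3   # illustrative sizes
g_weights = init_mlp(rng, [2 * d + dq, hidden, hidden])
f_weights = init_mlp(rng, [hidden, hidden, n_answers])
objects = rng.normal(size=(n, d))
question = rng.normal(size=dq)
logits = relation_network(objects, question, g_weights, f_weights)
```

Note that the same g is applied to every pair, so the number of parameters does not grow with the number of objects; only the compute does.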

Object Representations

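Objects can come from any source. For images, divide the CNN's final feature map into spatial locations; the feature vector at each location, tagged with its coordinates, is an "object." For text, as in the paper's bAbI experiments, each supporting sentence is encoded by an LSTM and treated as an object. The relation module is agnostic to object origin.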
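As a rough sketch of the image case, the snippet below turns a feature map into a set of objects by treating every spatial cell as one object and appending its position. The function name `feature_map_to_objects`, the (channels, height, width) layout, and the choice to normalize coordinates to [-1, 1] are assumptions made for illustration.

```python
import numpy as np

def feature_map_to_objects(feature_map):
    """Turn a (channels, height, width) map into (height*width, channels + 2) objects."""
    c, h, w = feature_map.shape
    cells = feature_map.reshape(c, h * w).T   # one row per spatial cell
    ys, xs = np.meshgrid(np.linspace(-1, 1, h), np.linspace(-1, 1, w),
                         indexing="ij")
    coords = np.stack([xs.ravel(), ys.ravel()], axis=1)  # (h*w, 2) as (x, y)
    return np.concatenate([cells, coords], axis=1)

# Stand-in for a CNN output: 24 channels on a 4 x 4 grid
feature_map = np.arange(24 * 4 * 4, dtype=float).reshape(24, 4, 4)
objects = feature_map_to_objects(feature_map)  # 16 objects, 26 features each
```

The coordinate tag matters: without it, the sum over pairs would discard where each cell came from, and spatial relations such as "left of" could not be recovered.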

This modularity lets relation networks plug into existing architectures. Add an RN layer to your CNN for relational reasoning without redesigning the whole system.

CLEVR Results

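On CLEVR, Relation Networks achieved 95.5% accuracy, compared to 68.5% for the strongest previously published baseline (a CNN + LSTM with stacked attention). On Sort-of-CLEVR, which separates relational from non-relational questions, the RN-augmented model exceeded 94% on relational questions versus 63% for the baseline.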

The architecture provides the right inductive bias: compare objects pairwise. Given this structure, learning the specific relations becomes tractable.

Quadratic Complexity

For n objects, RNs evaluate g on n² ordered pairs. With 100 objects, that's 10,000 relation computations per example. This quadratic growth in compute and memory limits scalability to scenes with many objects.

Sparse variants (only compare nearby objects) and attention-based selection (learn which pairs matter) address this. But the quadratic base case works well for moderate object counts.
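To make the savings from a sparse variant concrete, here is a small sketch that keeps only pairs of objects lying within a fixed distance of each other. The helper `nearby_pairs`, the 10 x 10 grid layout, and the radius are all hypothetical choices for illustration, not a method from the paper.

```python
import numpy as np

def nearby_pairs(positions, radius):
    """Return index arrays (i, j) of ordered pairs within `radius` of each other."""
    diffs = positions[:, None, :] - positions[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)   # (n, n) pairwise distances
    i, j = np.nonzero(dists <= radius)
    return i, j

# 100 objects on a 10 x 10 grid with unit spacing (toy layout)
ys, xs = np.meshgrid(np.arange(10), np.arange(10), indexing="ij")
positions = np.stack([xs.ravel(), ys.ravel()], axis=1).astype(float)

dense_count = len(positions) ** 2            # every ordered pair
i, j = nearby_pairs(positions, radius=1.0)   # self pairs plus 4-neighbours
sparse_count = len(i)
print(dense_count, sparse_count)             # 10000 460
```

In this toy layout g would run on 460 pairs instead of 10,000. The trade-off is that relations between distant objects are no longer compared directly.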

Connection to Other Papers

Paper #8 (Seq2Seq for Sets) uses permutation-invariant aggregation. Relation Networks aggregate pairwise features the same way: sum over pairs, then process. The permutation invariance ensures consistent answers regardless of object ordering.
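The invariance is easy to check numerically. In the sketch below, `g` is a toy hand-written function standing in for a learned MLP (an assumption for illustration); because the pooled result is a sum over all ordered pairs, shuffling the objects only reorders the terms.

```python
import numpy as np

def g(o_i, o_j):
    """Toy relation feature (stand-in for a learned MLP); not symmetric in its inputs."""
    return np.tanh(o_i - 2.0 * o_j)

def pooled_relations(objects):
    """Sum g over every ordered pair (i, j)."""
    return sum(g(o_i, o_j) for o_i in objects for o_j in objects)

rng = np.random.default_rng(1)
objects = rng.normal(size=(6, 4))
shuffled = objects[rng.permutation(6)]

# Same set of objects in a different order gives the same pooled vector
same = np.allclose(pooled_relations(objects), pooled_relations(shuffled))
```

Since f only ever sees the pooled vector, the final answer inherits the same invariance.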

Paper #12 (Graph Neural Networks) generalizes this to sparse graphs. GNNs compare only connected nodes; RNs compare all pairs. For dense relational reasoning over a modest number of objects, RNs work well; for large or structured graphs, GNNs are more efficient.

More in This Series

Part of a series on Ilya Sutskever's recommended 30 papers.