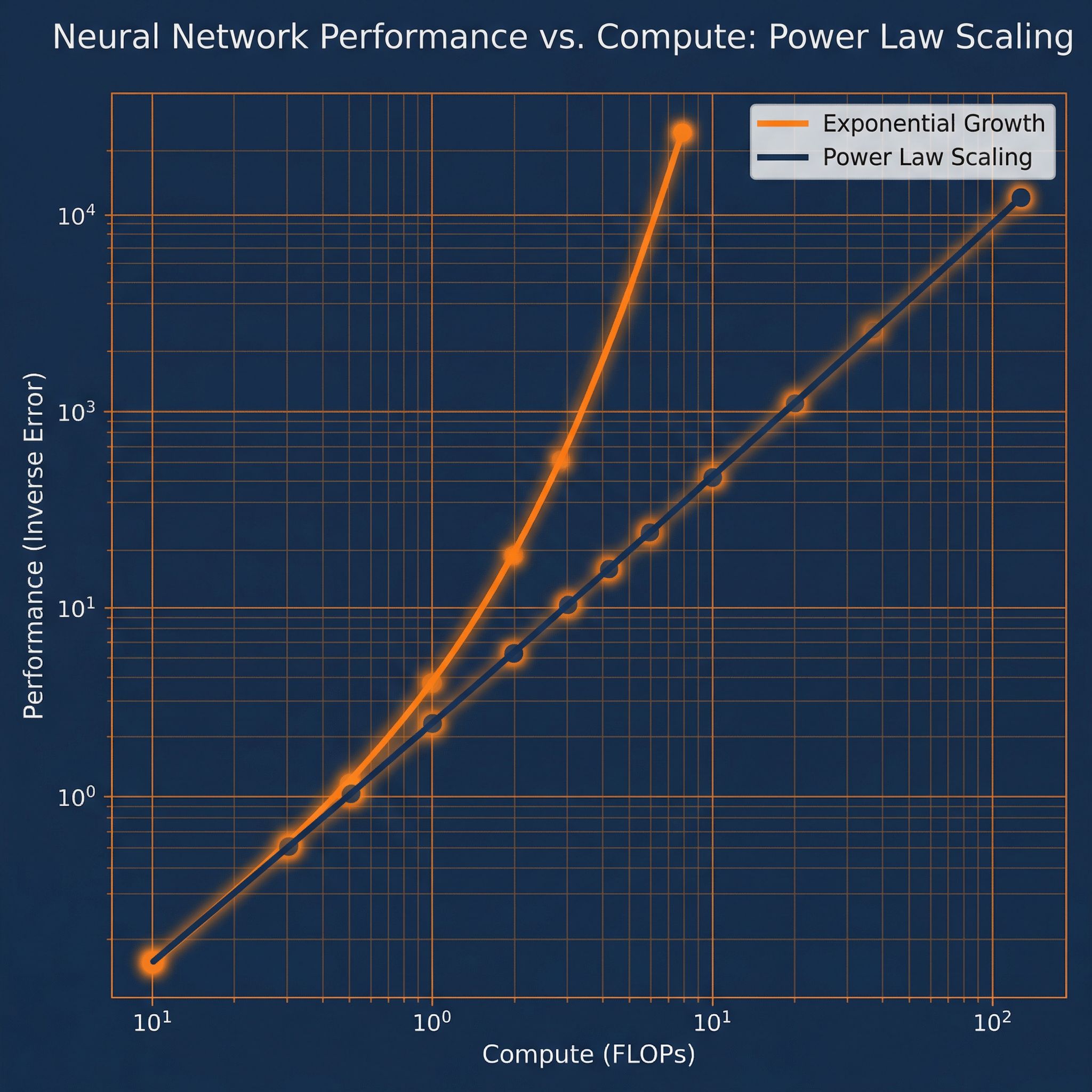

Double your compute budget. How much does performance improve? Scaling laws give a precise answer: loss follows power laws in parameters, data, and compute. These empirical regularities predict performance across seven orders of magnitude and guide billion-dollar training decisions.

Why Sutskever Included This

Scaling laws transformed AI development from art to engineering. Before these findings, researchers couldn't predict whether a 10x larger model would be worth the cost. Now they can forecast performance before training, allocate resources optimally, and plan multi-year research agendas with quantitative confidence.

The Power Laws

Three relationships govern transformer performance:

Loss vs Parameters: L(N) ∝ N^(-0.076)

Loss vs Data: L(D) ∝ D^(-0.095)

Loss vs Compute: L(C) ∝ C^(-0.050)

The exponents are small but consistent. Doubling parameters reduces loss by about 5%. Doubling data reduces it by about 6.5%. These gains compound over many doublings.

Predictability

The remarkable finding: these laws hold across seven orders of magnitude. Models from 768 parameters to 1.5 billion follow the same curves. This lets researchers extrapolate from small experiments to predict large-scale outcomes.

Before investing $100M in training, run $100K worth of experiments. Fit the scaling curve. Predict the result. The predictions work.

Compute-Optimal Training

Given a fixed compute budget, how should you split resources between model size and training data? The original OpenAI paper suggested: make models as large as possible, even if undertrained.

The Chinchilla paper revised this. For optimal performance per compute, scale model size and data together:

Optimal: N ∝ C^0.5 and D ∝ C^0.5

Model size and data should grow at the same rate as compute increases.

This means GPT-3 was undertrained for its size. Chinchilla, with fewer parameters but more training data, outperformed it. The finding shifted industry practice toward longer training on more data.

Implications for AI Development

Predictable progress: Given compute growth projections, we can forecast model capabilities years ahead. The exponential growth of compute translates to steady improvement.

Data bottlenecks: As models grow, they need proportionally more data. High-quality data may become the limiting factor before compute does.

Efficient scaling: Don't over-parameterize. Balance model size with training data. Larger isn't automatically better if training is insufficient.

What Scaling Laws Don't Predict

Power laws predict loss (perplexity). They don't directly predict downstream task performance or emergent capabilities. A model with 10% lower perplexity might be dramatically better at reasoning, or might not be.

The relationship between loss and capabilities remains an active research question. Scaling laws tell you how well a model predicts text; they don't tell you if it can write poetry.

Connection to Other Papers

Paper #9 (GPipe) enables training the large models these laws describe. Paper #13 (Transformers) provides the architecture. Together, they explain why companies invest billions in training larger language models: the returns are predictable.

Further Reading

More in This Series

Part of a series on Ilya Sutskever's recommended 30 papers.