Standard language models predict one token at a time. Multi-token prediction trains models to forecast several future tokens simultaneously. This improves sample efficiency, creates better representations, and enables faster inference through speculative decoding.

Why Sutskever Included This

Next-token prediction is the dominant training objective, but it's not the only option. Multi-token prediction extracts more learning signal per example and may produce representations that capture deeper structure. The technique also enables practical speedups.

The Architecture

A shared transformer backbone processes the input. Multiple output heads then predict different future positions: head 1 predicts the next token, head 2 predicts the token two positions ahead, and so on.

$$\text{Loss} = -\log P(w_{t+1} \mid w_{1:t}) - \log P(w_{t+2} \mid w_{1:t}) - \log P(w_{t+3} \mid w_{1:t})$$

Each head contributes to the total loss. The shared backbone must learn representations useful for all prediction horizons.
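To make the summed loss concrete, here is a minimal sketch in plain Python for a single position. It assumes three heads and a toy vocabulary of four tokens. The function names `softmax` and `multi_token_loss` and all the numbers are illustrative, not taken from any particular implementation.

```python
import math

def softmax(logits):
    """Convert a list of raw scores into probabilities."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def multi_token_loss(head_logits, future_tokens):
    """Sum of per-head negative log-likelihoods at one position.

    head_logits[k] holds the vocabulary scores from head k+1;
    future_tokens[k] is the index of the true token k+1 steps ahead.
    """
    loss = 0.0
    for logits, target in zip(head_logits, future_tokens):
        probs = softmax(logits)
        loss += -math.log(probs[target])
    return loss

# Toy example (hypothetical numbers): 4-token vocabulary, 3 heads.
head_logits = [
    [2.0, 0.0, 0.0, 0.0],  # head 1 favours token 0
    [0.0, 0.0, 0.0, 0.0],  # head 2 is uniform over the vocabulary
    [0.0, 0.0, 3.0, 0.0],  # head 3 favours token 2
]
future_tokens = [0, 1, 2]
loss = multi_token_loss(head_logits, future_tokens)
```

In a real model the three rows of `head_logits` would all be computed from the same backbone output, so the gradient of every term flows back into the shared layers.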

Sample Efficiency

Each training position provides N learning signals instead of one, where N is the number of output heads. Models trained with multi-token prediction are claimed to achieve comparable performance using roughly 1/3 of the training data.
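The extra signal comes from how targets are built. The sketch below is illustrative only: the helper name `multi_token_targets` is hypothetical, and dropping positions near the end of the sequence is a simplifying assumption. It pairs each context with the next N tokens rather than just one.

```python
def multi_token_targets(tokens, n_heads):
    """For each position t, pair the context with the next n_heads tokens.

    Positions too close to the end of the sequence to have all
    n_heads targets are skipped, to keep the sketch simple.
    """
    examples = []
    for t in range(len(tokens) - n_heads):
        context = tokens[: t + 1]
        targets = tokens[t + 1 : t + 1 + n_heads]
        examples.append((context, targets))
    return examples

tokens = ["the", "cat", "sat", "on", "the", "mat"]
examples = multi_token_targets(tokens, n_heads=3)

# Count the individual prediction targets across all positions.
signals = sum(len(targets) for _, targets in examples)
```

Here the first example pairs the context `["the"]` with the targets `["cat", "sat", "on"]`, so that single position supplies three prediction targets instead of one.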

The constraint of predicting multiple tokens forces the model to encode longer-term structure rather than memorizing local patterns.

Better Representations

Predicting only the next token can be satisfied by shallow pattern matching. Predicting multiple tokens ahead requires understanding deeper dependencies.

The shared backbone must compute features useful for both immediate and distant predictions. This constraint may improve generalization.

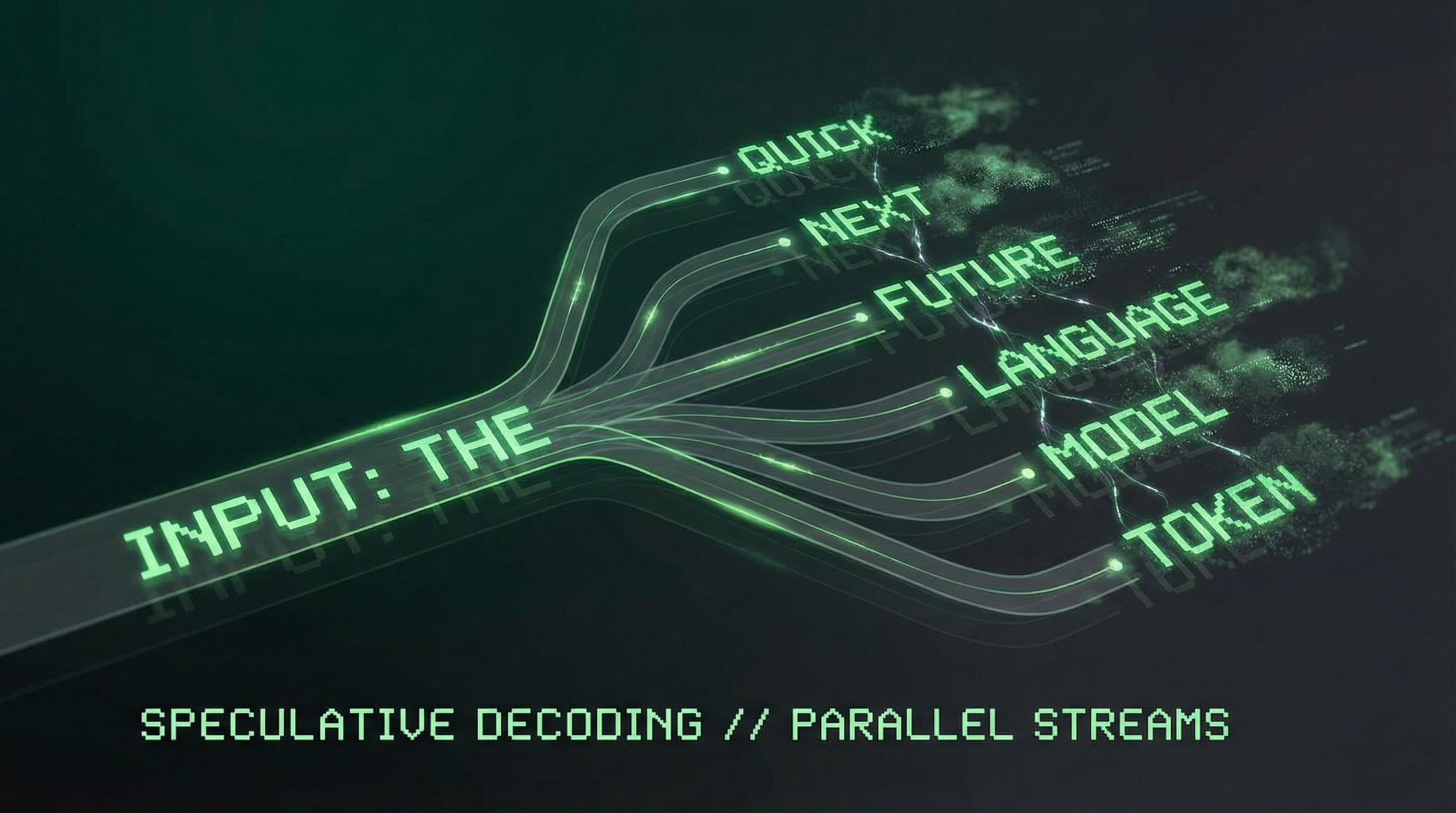

Speculative Decoding

At inference, multi-token prediction enables speculative decoding. The model generates several candidate tokens in one forward pass. A verifier (potentially the same model) checks whether these match what autoregressive generation would produce.

When speculation succeeds, several tokens are accepted per forward pass, yielding up to a 3x speedup in practice.
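The accept-or-reject step can be sketched as follows, assuming greedy decoding. The names `accept_drafts` and `toy_next_token` are hypothetical, and the toy verifier simply continues a fixed phrase. A real system would score all drafted positions in one batched forward pass; the loop below calls the verifier step by step only to keep the logic readable.

```python
def accept_drafts(draft_tokens, next_token_fn, context):
    """Keep the longest prefix of draft_tokens that matches what
    step-by-step greedy decoding with next_token_fn would produce.

    On the first mismatch the verifier's own token is used instead,
    so every call yields at least one new token.
    """
    accepted = []
    for draft in draft_tokens:
        expected = next_token_fn(context + accepted)
        if draft != expected:
            accepted.append(expected)
            break
        accepted.append(draft)
    return accepted

# Hypothetical stand-in for the next-token head: continue a fixed phrase.
PHRASE = ["the", "cat", "sat", "on", "the", "mat"]

def toy_next_token(context):
    return PHRASE[len(context)]

# All three drafts agree with the verifier, so all three are kept.
full = accept_drafts(["sat", "on", "the"], toy_next_token, ["the", "cat"])

# The second draft is wrong: keep "sat", substitute "on", discard the rest.
partial = accept_drafts(["sat", "in", "a"], toy_next_token, ["the", "cat"])
```

Because rejected drafts are replaced by the verifier's own choice, the output is identical to ordinary greedy decoding. Speculation changes only how many tokens are produced per step.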

Minimal Overhead

The architecture shares embeddings and transformer layers across all predictions. Only small linear output heads are duplicated. The additional compute cost is modest; the efficiency gains are substantial.
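As a rough back-of-the-envelope check on the parameter overhead, the sketch below assumes each extra head is a single linear layer of size d_model x vocab_size. The function name `extra_head_fraction` and every size used are hypothetical, chosen only for illustration.

```python
def extra_head_fraction(backbone_params, d_model, vocab_size, n_heads):
    """Fraction of parameters added by the extra linear output heads.

    Each head is assumed to be a single d_model x vocab_size matrix;
    head 1 is counted as part of the baseline next-token model.
    """
    per_head = d_model * vocab_size
    extra = (n_heads - 1) * per_head
    return extra / backbone_params

# Hypothetical sizes, chosen only for illustration.
fraction = extra_head_fraction(
    backbone_params=7_000_000_000,
    d_model=4096,
    vocab_size=32_000,
    n_heads=3,
)
```

With these assumed sizes the two extra heads add about 3.7% more parameters. The figure depends heavily on vocabulary size and model width, so it should be read as an order-of-magnitude estimate rather than a measurement.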

More in This Series

Part of a series on Ilya Sutskever's recommended 30 papers.