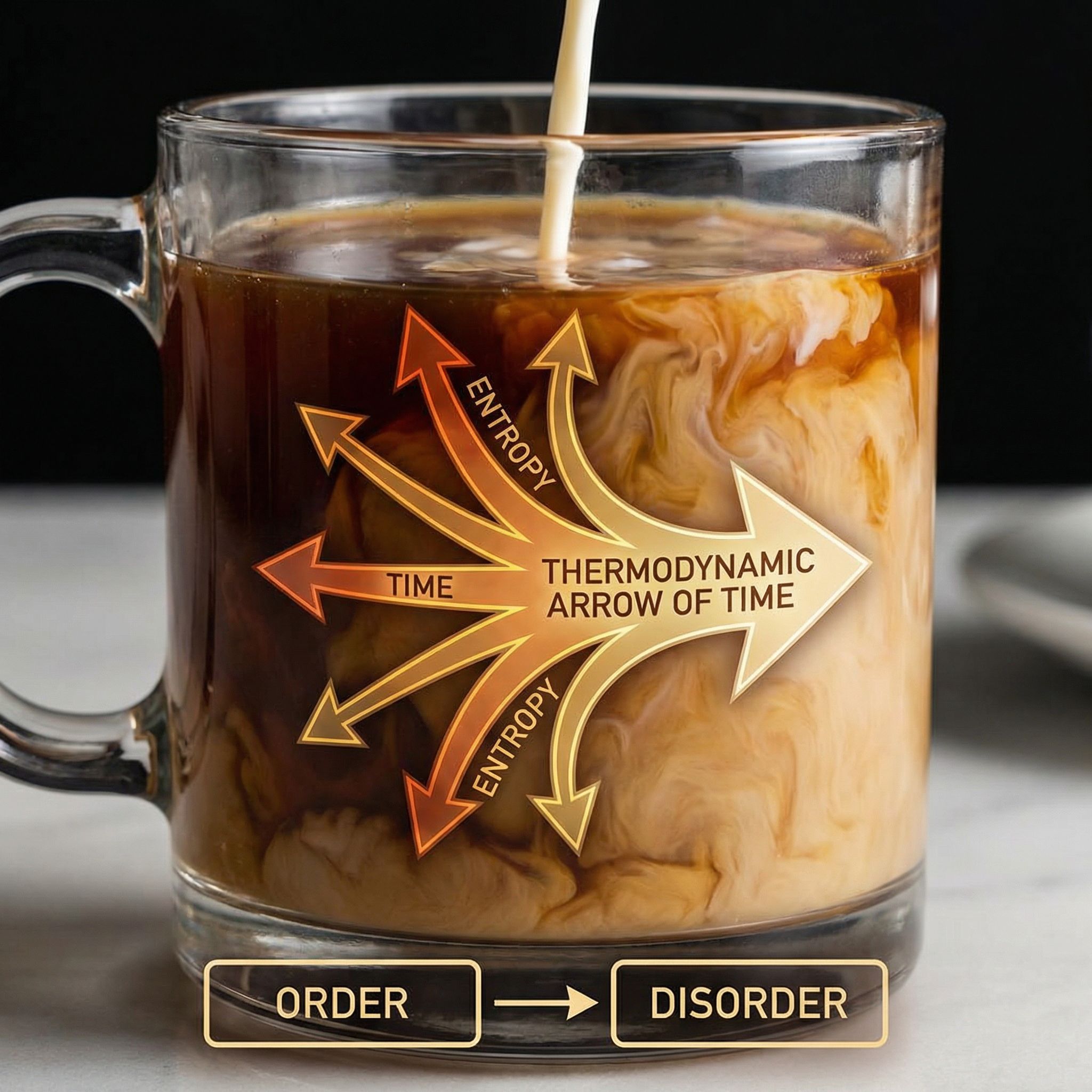

Pour cream into coffee. It mixes. Wait as long as you like; it does not unmix. Yet the microscopic laws of physics are time-symmetric: every collision is reversible. Where does irreversibility come from? The answer connects thermodynamics to information theory to machine learning.

Why Sutskever Included This

Understanding irreversibility illuminates learning itself. Neural networks compress data irreversibly, discarding details to extract patterns. Information theory, thermodynamics, and computation share deep connections. This paper explores those foundations.

The Paradox

Molecular collisions are reversible. Film a collision and play it backward; the reversed motion also follows Newton's laws. Yet macroscopic processes have a direction. Coffee cools; it doesn't spontaneously heat up. Eggs break; they don't unbreak.

The laws don't change. Something else creates the asymmetry.

Entropy and Microstates

Entropy counts microstates. A "mixed" coffee has an astronomical number of molecular arrangements that all look uniformly brown. An "unmixed" coffee with cream on top has far fewer. Boltzmann's formula makes this precise: S = k log W, where k is Boltzmann's constant, log is the natural logarithm, and W is the number of microstates compatible with the macrostate.
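To make the counting concrete, here is a minimal sketch of a toy cup, not a model of real coffee. It assumes a cup split into a top and bottom half, each with a fixed number of sites that hold at most one cream particle. The names `microstate_count`, `SITES_PER_HALF`, and `CREAM_TOTAL` are invented for this illustration.

```python
from math import comb, log

SITES_PER_HALF = 50   # assumed toy size: 50 sites on top, 50 on the bottom
CREAM_TOTAL = 50      # assumed number of cream particles

def microstate_count(cream_in_top: int) -> int:
    """W for the macrostate 'cream_in_top particles sit in the top half'."""
    cream_in_bottom = CREAM_TOTAL - cream_in_top
    # Choose which top sites and which bottom sites are occupied by cream.
    return comb(SITES_PER_HALF, cream_in_top) * comb(SITES_PER_HALF, cream_in_bottom)

def entropy_in_units_of_k(w: int) -> float:
    """S / k = log W, using the natural logarithm."""
    return log(w)

w_unmixed = microstate_count(CREAM_TOTAL)       # all cream on top: exactly 1 arrangement
w_mixed = microstate_count(CREAM_TOTAL // 2)    # cream split evenly between the halves

print("unmixed: W =", w_unmixed, " S/k =", entropy_in_units_of_k(w_unmixed))
print("mixed:   W =", w_mixed, " S/k =", entropy_in_units_of_k(w_mixed))
```

Even with only 100 sites, the evenly split macrostate outnumbers the unmixed one by dozens of orders of magnitude. With a real cup's particle count the gap is far larger.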

Random molecular motion wanders through the accessible microstates with no preference among them. The overwhelming majority of those microstates belong to high-entropy macrostates. So the system drifts toward what's typical, and typical means mixed.
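The drift toward the typical can be watched in a small simulation. The sketch below uses the Ehrenfest urn model as a stand-in for molecular motion. That choice is an assumption made for illustration, not something the paper prescribes. At each step one particle is picked uniformly at random and moved to the other side of the container. The function name `ehrenfest` and the particle count are hypothetical.

```python
import random

def ehrenfest(n_particles: int, n_steps: int, seed: int = 0) -> list[int]:
    """Return the number of particles on the left side after each step."""
    rng = random.Random(seed)
    left = n_particles          # start "unmixed": every particle on the left
    history = [left]
    for _ in range(n_steps):
        # A uniformly chosen particle is on the left with probability left / n_particles.
        if rng.randrange(n_particles) < left:
            left -= 1           # it hops to the right
        else:
            left += 1           # it hops to the left
        history.append(left)
    return history

history = ehrenfest(n_particles=1000, n_steps=20000)
for step in (0, 500, 2000, 20000):
    print(f"step {step:>6}: {history[step]} of 1000 particles on the left")
```

No step in the rule prefers mixing. The count settles near half simply because almost all arrangements have roughly half the particles on each side.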

Coarse-Graining

We don't track individual molecules. We observe macroscopic properties: temperature, color, concentration. This coarse-graining is where irreversibility enters.

Microscopically, the dynamics preserve information: by Liouville's theorem, phase-space volume is conserved, so distinct microstates stay distinct. Macroscopically, information about precise molecular positions is lost. The coarse-grained description sees entropy increase because it discards the fine-grained correlations that would, in principle, let the motion be run backward.
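A toy system shows both halves of this claim at once. The sketch below uses Arnold's cat map on a discrete grid as an assumed stand-in for reversible microscopic dynamics. It is chosen only because it is an exactly invertible shuffle and is not the model analyzed in the paper. The grid size `N`, the coarse cell size `CELL`, and all function names are hypothetical.

```python
from collections import Counter
from math import log

N = 64      # fine grid is N x N sites
CELL = 16   # coarse cells are CELL x CELL sites, giving 16 coarse cells

def cat_map(p):
    """One reversible step: (x, y) -> (2x + y, x + y) mod N."""
    x, y = p
    return ((2 * x + y) % N, (x + y) % N)

def inverse_cat_map(p):
    """Exact inverse of cat_map: (x, y) -> (x - y, 2y - x) mod N."""
    x, y = p
    return ((x - y) % N, (2 * y - x) % N)

def coarse_entropy(points) -> float:
    """Shannon entropy of how the particles are spread over coarse cells."""
    counts = Counter((x // CELL, y // CELL) for x, y in points)
    total = len(points)
    return -sum(c / total * log(c / total) for c in counts.values())

# Start "unmixed": 256 particles filling a single coarse cell.
start = frozenset((x, y) for x in range(CELL) for y in range(CELL))

state = start
entropies = [coarse_entropy(state)]
for _ in range(5):
    state = frozenset(cat_map(p) for p in state)
    entropies.append(coarse_entropy(state))

# Fine-grained information is intact: running the steps backward restores the start.
rewound = state
for _ in range(5):
    rewound = frozenset(inverse_cat_map(p) for p in rewound)

print("coarse entropy per step:", [round(s, 3) for s in entropies])
print("particles conserved:", len(state) == len(start))
print("exactly reversible:", rewound == start)
```

The exact particle positions always allow a perfect rewind. The coarse cell counts, which are all a macroscopic observer sees, do not.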

Poincaré Recurrence

Given infinite time, any bounded, isolated system returns arbitrarily close to its initial state. The coffee will unmix, eventually. But "eventually" means something on the order of e^(10²³) seconds. The universe is about 10¹⁷ seconds old. We'll never see it happen.
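A rough calculation gives a feel for these numbers. The sketch below assumes the Ehrenfest urn as a toy model: n particles, with one randomly chosen particle switching sides per step. In that model the mean number of steps before the system returns to "all particles on one side" is 2^n. This is a simplified stand-in for the estimate quoted above, not a derivation of it, and the function name is hypothetical.

```python
from math import log10

LOG10_AGE_OF_UNIVERSE_SECONDS = 17  # from the text: about 10^17 seconds

def log10_mean_recurrence_steps(n_particles: float) -> float:
    """log10 of 2**n, the mean return time (in steps) of the toy urn model."""
    return n_particles * log10(2)

for n in (10, 100, 1e23):
    exponent = log10_mean_recurrence_steps(n)
    verdict = "observable" if exponent < LOG10_AGE_OF_UNIVERSE_SECONDS else "never seen"
    print(f"n = {n:g}: about 10^{exponent:.3g} steps ({verdict} even at one step per second)")
```

Ten particles recur in about a thousand steps. A hundred particles already exceed the age of the universe. For a macroscopic particle count, the exponent itself is astronomically large.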

Irreversibility is practical, not fundamental. The timescales are just too vast.

The Arrow of Time

Why do we remember the past but not the future? Why does entropy increase forward in time? The standard answer traces to initial conditions: the universe began, at the Big Bang, in a state of extremely low entropy. All apparent time-asymmetry is inherited from this cosmological boundary condition.

The laws don't prefer a direction. The universe's initial state does.

Landauer's Principle

Erasing information has a thermodynamic cost. Resetting a bit requires dissipating at least kT ln 2 of energy as heat, where k is Boltzmann's constant and T is the temperature of the environment. Computation that discards information must generate heat.
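The bound is easy to evaluate. The sketch below computes kT ln 2 at an assumed room temperature of 300 K. The function name `landauer_limit_joules` and the one-gigabyte example are illustrative choices, not figures from the paper.

```python
from math import log

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann's constant (exact in the SI since 2019)

def landauer_limit_joules(temperature_kelvin: float, bits: float = 1) -> float:
    """Minimum heat dissipated when erasing `bits` bits at the given temperature."""
    return bits * BOLTZMANN_J_PER_K * temperature_kelvin * log(2)

ROOM_TEMPERATURE_K = 300  # assumed for illustration

per_bit = landauer_limit_joules(ROOM_TEMPERATURE_K)            # roughly 2.87e-21 J
per_gigabyte = landauer_limit_joules(ROOM_TEMPERATURE_K, 8e9)  # 8e9 bits in 1 GB

print(f"erase 1 bit at 300 K:  {per_bit:.3e} J")
print(f"erase 1 GB at 300 K:   {per_gigabyte:.3e} J")
```

The limit is tiny compared with what practical hardware dissipates. The principle matters here as a conceptual link between information and heat, not as an engineering constraint.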

Neural networks compress data massively. This compression is irreversible: in general, you can't recover the training images from the model weights. The information bottleneck that enables generalization is a logical counterpart of entropy increase, and by Landauer's principle any physical computation that discards information this way must also dissipate heat.

Connection to Learning

Good models compress data well. They capture patterns and discard noise. This compression parallels mixing: details are lost, structure remains. Understanding irreversibility helps explain why compression works and what it preserves.
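A general-purpose compressor gives a quick illustration of why patterns compress and noise does not. The sketch below uses Python's standard `zlib` module as a stand-in. It is lossless, unlike a learned model, so it shows only the "structure is cheap to describe" half of the story. The two byte strings are invented for the example.

```python
import random
import zlib

N_BYTES = 10_000
rng = random.Random(0)  # fixed seed so the run is repeatable

# Structured data: a 16-byte pattern repeated over and over.
patterned = bytes(i % 16 for i in range(N_BYTES))
# Unstructured data: pseudo-random bytes with no pattern to exploit.
noise = bytes(rng.randrange(256) for _ in range(N_BYTES))

def compressed_size(data: bytes) -> int:
    """Length in bytes after zlib compression at the highest level."""
    return len(zlib.compress(data, 9))

print("patterned:", N_BYTES, "->", compressed_size(patterned), "bytes")
print("noise:    ", N_BYTES, "->", compressed_size(noise), "bytes")
```

The patterned input shrinks to a small fraction of its size, while the noisy input barely shrinks at all. A model that generalizes is, loosely, spending its capacity on the first kind of content and dropping the second.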

Paper #23 (MDL) and Paper #25 (Kolmogorov Complexity) develop these connections further.

More in This Series

Part of a series on Ilya Sutskever's recommended 30 papers.